Why Building an AI “Mission Control” Sounds Cool (But Gets Messy Fast)

The super prompt myth meets reality: AI agent platforms still require real engineering, cleanup and repeated refinement.

The Promise of AI Mission Control

There has been a lot of noise lately around AI agents, autonomous workflows and what some people like to call “AI teams”. The idea is seductive: spin up multiple agents, assign each one a role, let them reason together, and somehow you now have a kind of mission control platform running tasks for you.

I’ll admit, I’ve been drawn into that idea myself.

Partly because it is genuinely interesting, and partly because if you work in engineering or automation, the thought of stitching together a research agent, coding agent, validation agent and reporting agent into one orchestrated system sounds too compelling not to try.

And yes, tools like OpenClaw, Hermes and earlier experiments like AutoGPT have pushed that vision surprisingly far.

On paper, it looks powerful.

In demos, it looks even better.

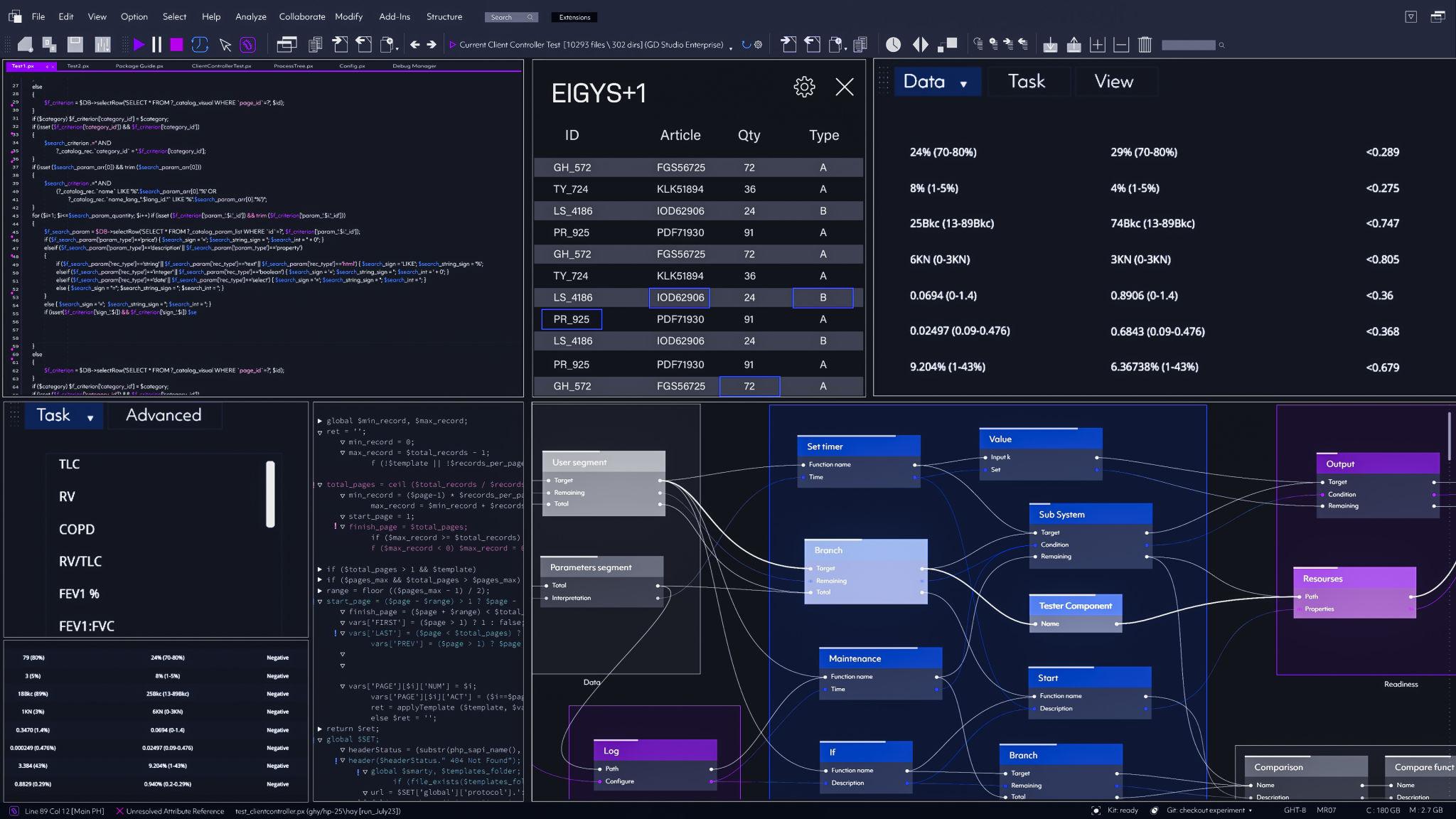

You see agent swarms, dashboards, autonomous loops, and suddenly it feels like software development is moving toward AI-operated mission control centers.

Naturally, I wanted to test how much of that was real.

Could I use multiple models, wire them behind agent frameworks, and build something practical?

In some ways, this experimentation also builds on a thought I wrote about earlier — that sometimes the instinct to build another tool is itself worth questioning. In that earlier piece, I argued that not every operational problem needs a new layer of tooling. This agentic “mission control” idea feels like the next evolution of that same question: what happens when we don’t just build another tool, but try to build a system of tools that can reason with each other?

That was partly what pushed me down this rabbit hole.

So, could I build something practical? The answer is yes.

But not in the effortless way the hype suggests.

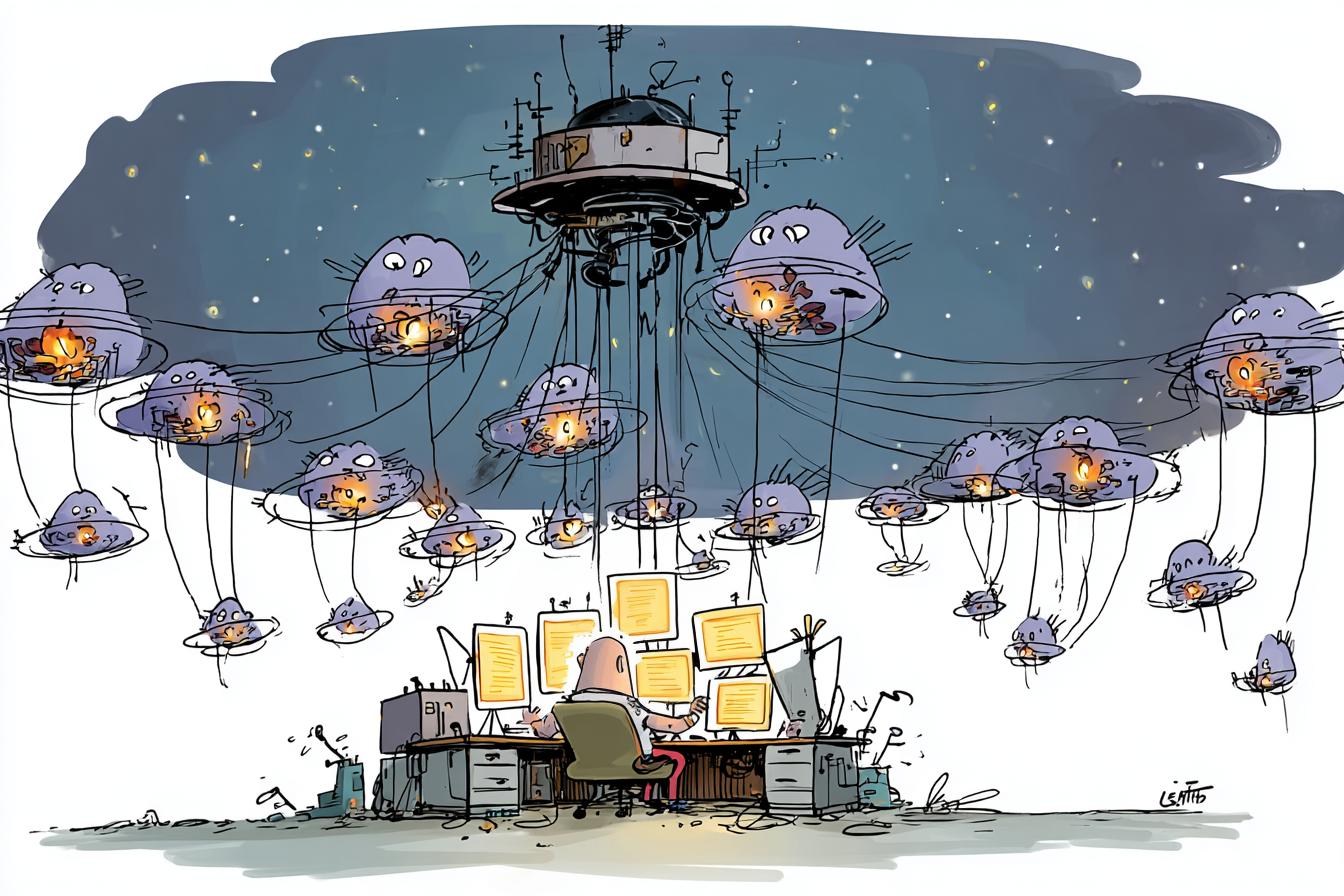

There’s a myth I keep seeing repeated online about the “super prompt”: the idea that there exists one magical prompt capable of generating an entire polished multi-agent platform.

Personally, I think that is overhyped.

A super prompt is usually just many trial-and-error prompts collapsed into one giant instruction set. There is already hidden engineering inside it.

And even then, it doesn’t remove iteration.

That part matters.

Because many people market the output.

They rarely show the mess behind getting there.

Where It Gets Messy Fast

Mixing models, to be fair, is not really the issue. In fact, I think mixing models can be powerful and sometimes even necessary. It is fairly normal to use one model for planning, another for coding, and perhaps another for validation or cleanup. I’ve done this myself — using one model to reason through architecture, another to generate code, and then bringing in a different model again when things start breaking. On paper, this seems like a strength. You are effectively using the best of different systems.

But this is also where complications begin.

What becomes obvious after enough experimentation is that each model carries its own assumptions about how software should be structured. Even when they solve the same problem, they often solve it differently. One may favor modularity and separation of concerns. Another may generate large monolithic chunks of logic. Some over-engineer abstractions that were never needed, while others simplify too aggressively and miss important structure. None of this is necessarily wrong in isolation, but once these outputs begin to mix inside a single project, maintaining coherence becomes difficult.

That is the part most demos never really show.

At first the generated output often looks surprisingly convincing. You get folders, scripts, maybe even something resembling a working application scaffold. It feels productive. But once you begin iterating — and iteration always comes — the project starts drifting. You ask one model to fix something another model produced, and the fix introduces a new inconsistency. You ask another model to help patch that issue, and perhaps it improves one component while quietly restructuring another. Over time, what looked like progress starts feeling more like negotiation between competing coding opinions.

That is usually when the development process stops feeling linear.

Instead of moving from idea to implementation in a clean progression, you enter a cycle of prompting, generating, fixing, re-prompting and restructuring. The challenge is not merely debugging errors. The harder problem is that the project gradually loses internal consistency. File structures become harder to reason about, logic starts living in unexpected places, dependencies become messy, and suddenly what should have been a straightforward prototype starts behaving like something stitched together rather than designed.

I think this is where many people eventually hit a reality check.

At some point, you stop asking models to repair the system and start inspecting the codebase yourself. That is often where things become obvious very quickly. You begin noticing mismatched patterns, questionable mappings, strange routing decisions, components doing too much, or business logic placed where it should never have been. The issue is no longer whether the code runs. It becomes whether the system makes sense.

And that is a much harder problem.

Broken code can often be repaired relatively quickly. Messy architecture takes much longer to untangle. That distinction, for me, was probably one of the biggest lessons from experimenting with agentic development. LLMs can be remarkably good at generating software fragments, but generation alone is not the same as coherent system design.

That gap matters much more than people think.

My Honest Take After Experimenting

To be clear, none of this means I think agentic AI or mission control style platforms are nonsense. Quite the opposite. I actually think there is enormous potential here, and I’m still actively exploring it. The ability to orchestrate research, coding, validation and automation through agents is genuinely compelling, especially for engineering-heavy workflows where repeatable processes matter.

What has changed for me is not whether I believe in the idea, but how I interpret the hype around it.

I’ve become much more skeptical of claims that these systems are close to effortless autonomy. That framing often hides how much manual intervention still exists underneath. In practice, the hard work tends to move rather than disappear. Instead of writing everything from scratch, you may spend your effort refining prompts, validating outputs, restructuring code, forcing consistency back into a drifting system, and repeatedly correcting assumptions introduced by the models themselves.

That is still engineering.

Just a different flavor of it.

And I think that is an important distinction, especially for people coming into this space thinking AI removes the need for technical rigor. In many ways, it does the opposite. It makes structure, architectural thinking and debugging discipline even more important, because generated systems can look complete before they are actually coherent.

That has probably been my biggest personal takeaway.

AI can absolutely help accelerate exploration. It can help prototype faster, surface ideas faster, and even lower the barrier for people who are not strong coders. I have no doubt about that. But acceleration is not the same as reliability, and scaffolding is not the same as a maintainable system.

Those things still have to be engineered.

And maybe that is why I keep coming back to the same conclusion: AI can help you build a platform, but it does not remove the responsibility of understanding the platform. Sooner or later, you still need to make sense of what has been produced, especially if you expect it to evolve into something real.

That is where the fantasy often meets reality.

And honestly, that reality is not necessarily disappointing — it is simply less magical and more grounded than people make it sound.

For me, that makes the space even more interesting, not less.

Because once you stop looking at agentic AI as “autonomous magic” and start treating it as a new engineering medium, the conversation becomes much more honest.

And much more useful.